FreeSync vs. G-Sync 2022: Which Variable Refresh Tech Is Best

AMD and Nvidia battle for smooth gaming supremacy.

For the past few years, the best gaming monitors have enjoyed something of a renaissance. Before Adaptive-Sync technology appeared in the form of Nvidia G-Sync and AMD FreeSync, the only thing performance-seeking gamers could hope for was higher resolutions or a refresh rate above 60 Hz. Today, not only do we have monitors routinely operating at 144 Hz and higher, Nvidia and AMD have both been updating their respective technologies. In this age of gaming displays, which Adaptive-Sync tech reigns supreme in the battle between FreeSync and G-Sync?

We've also got next-generation graphics cards arriving, like the Nvidia GeForce RTX 4090 and other Ada Lovelace GPUs with DLSS 3 technology that can potentially double framerates, even at 4K. AMD's RDNA 3 and Radeon RX 7000-series GPUs are slated to arrive shortly, and should likewise boost performance and make higher quality displays more useful.

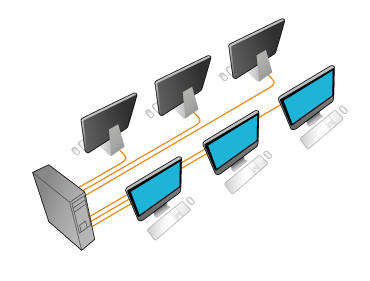

For the uninitiated, Adaptive-Sync means that the monitor’s refresh cycle is synced with the rate at which the connected PC’s graphics card renders each frame of video, even if that rate changes. Games render each frame sequentially, and the rate can vary widely depending on the complexity of the scene being rendered. With a fixed monitor refresh rate, the screen updates at a specific cadence, like 60 times per second for a 60 Hz display. What happens if a new frame is ready before the scheduled update?

There are a few options. One is to have the GPU hold the new frame until the monitor's next scheduled refresh, which increases system latency and can make games feel less responsive. Another option is for the GPU to send the new frame to the monitor right away and let it start drawing that frame partway through the current refresh. The resulting artifact is called tearing, and it is shown in the above image.

G-Sync (for Nvidia-based GPUs) and FreeSync (AMD GPUs and potentially Intel GPUs as well) aim to solve the above problems, providing maximum performance, minimal latency, and no tearing. The GPU sends a "frame ready" signal to a G-Sync or FreeSync monitor, which draws the new frame and then awaits the next "frame ready" signal, thereby eliminating any tearing artifacts.
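To make the trade-off concrete, here is a minimal Python sketch of the timing involved. It is not how any real driver or monitor is implemented; the function names and the simple timing model (times in milliseconds, a perfectly regular refresh clock) are our own assumptions for illustration. It compares how long a finished frame sits idle on a fixed-refresh display with how long it waits on an adaptive one.

```python
import math


def fixed_refresh_wait_ms(frame_ready_ms: float, refresh_hz: float) -> float:
    """Time a finished frame waits for the next scheduled refresh.

    Assumes (for illustration) that refreshes happen at exact multiples
    of the refresh interval, starting at t = 0.
    """
    interval = 1000.0 / refresh_hz
    next_refresh = math.ceil(frame_ready_ms / interval) * interval
    return next_refresh - frame_ready_ms


def adaptive_wait_ms(frame_ready_ms: float, last_refresh_ms: float,
                     max_refresh_hz: float) -> float:
    """Time a finished frame waits on an adaptive-sync display.

    The monitor refreshes as soon as the frame is ready; the only limit
    modeled here is that it cannot refresh faster than its maximum rate.
    """
    min_interval = 1000.0 / max_refresh_hz
    earliest_refresh = last_refresh_ms + min_interval
    return max(0.0, earliest_refresh - frame_ready_ms)


if __name__ == "__main__":
    # Hypothetical frame: finished 20 ms in, previous refresh at 10 ms.
    print("60 Hz fixed wait (ms):", round(fixed_refresh_wait_ms(20.0, 60.0), 2))
    print("144 Hz adaptive wait (ms):", adaptive_wait_ms(20.0, 10.0, 144.0))
```

In this simplified model the fixed display makes the frame wait for the next tick of its clock, while the adaptive display shows it as soon as the panel is physically able to refresh again.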

Today, you’ll find countless monitors, even non-gaming ones, boasting some flavor of G-Sync, FreeSync, or both. If you haven’t committed to a graphics card technology yet, you might be wondering which is best when considering FreeSync vs. G-Sync. And if you have the option of using either, will one offer a greater gaming advantage than the other?

FreeSync vs. G-Sync

Fundamentally, G-Sync and FreeSync are the same. They both sync the monitor to the graphics card and let that component control the refresh rate on a continuously variable basis. To earn each certification, a monitor has to meet that certification's requirements, but it can also go beyond them. For example, a FreeSync monitor isn't required to have HDR but some do, and some FreeSync monitors reduce motion blur via a proprietary partner tech, like Asus ELMB Sync.

Can the user see a difference between the two? In our experience, there is no visual difference in FreeSync vs. G-Sync when frame rates are the same and the monitor quality is the same. Achieving such parity, however, is far from guaranteed. Our monitor reviews have highlighted a few things that can add to or subtract from the gaming experience and that have little to nothing to do with refresh rates and Adaptive-Sync technologies.

The HDR quality is also subjective at this time, although G-Sync Ultimate claims to offer "lifelike HDR." It then comes down to the feature set of the rival technologies. What does all this mean? Let’s take a look.

G-Sync Features

G-Sync monitors typically carry a price premium because they contain the extra hardware needed to support Nvidia’s version of adaptive refresh. When G-Sync was new (Nvidia introduced it in 2013), it would cost you about $200 extra to purchase the G-Sync version of a display, all other features and specs being the same. Today, the gap is closer to $100.

However, FreeSync monitors can also be certified as G-Sync Compatible. The certification can happen retroactively, and it means a monitor can run G-Sync within Nvidia's parameters, despite lacking Nvidia's proprietary scaler hardware. A visit to Nvidia’s website reveals a list of monitors that have been certified to run G-Sync. You can technically run G-Sync on a monitor that's not G-Sync Compatible-certified, but the quality and experience are not guaranteed. For more, see our articles on How to Run G-Sync on a FreeSync Monitor and Should You Care if Your Monitor's Certified G-Sync Compatible?

There are a few guarantees you get with G-Sync monitors that aren’t always available in their FreeSync counterparts. One is blur-reduction (ULMB) in the form of a backlight strobe. ULMB is Nvidia’s name for this feature; some FreeSync monitors also have it under a different name. ULMB works in place of Adaptive-Sync rather than alongside it, but some prefer it, perceiving it to have lower input lag. We haven’t been able to substantiate this in testing. However, when you run at 100 frames per second (fps) or higher, blur is typically a non-issue and input lag is very low, so you might as well keep things tight with G-Sync engaged.

G-Sync also guarantees that you will never see a frame tear even at the lowest refresh rates. Below 30 Hz, G-Sync monitors double the frame renders (thereby doubling the refresh rate) to keep them running in the adaptive refresh range.
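The frame-doubling idea can be sketched in a few lines of Python. This is only an illustration under our own assumptions: the function name `lfc_refresh` is hypothetical, the 30 Hz and 144 Hz limits are example values, and we generalize "doubling" to any whole-number repeat count, which may not match what a given monitor's firmware actually does.

```python
def lfc_refresh(fps: float, vrr_min_hz: float = 30.0,
                vrr_max_hz: float = 144.0) -> tuple[int, float]:
    """Return (repeat_count, panel_refresh_hz) for a given frame rate.

    Each rendered frame is shown `repeat_count` times so that the panel's
    refresh rate stays inside its adaptive range. Illustrative model only.
    """
    if fps <= 0:
        raise ValueError("fps must be positive")
    repeats = 1
    # Repeat each frame until the resulting refresh rate reaches the floor.
    while fps * repeats < vrr_min_hz:
        repeats += 1
    refresh_hz = fps * repeats
    if refresh_hz > vrr_max_hz:
        # The adaptive range is too narrow to fit a whole multiple.
        raise ValueError("no whole-number multiple fits the adaptive range")
    return repeats, refresh_hz


if __name__ == "__main__":
    for fps in (60, 25, 12):
        print(fps, "fps ->", lfc_refresh(fps))
```

With these example limits, a game running at 25 fps would have each frame drawn twice so the panel runs at 50 Hz, which keeps it inside the adaptive range.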

FreeSync Features

FreeSync has a price advantage over G-Sync because it uses an open standard created by VESA, Adaptive-Sync, which is also part of VESA’s DisplayPort spec.

Any DisplayPort interface version 1.2a or higher can support adaptive refresh rates. While a manufacturer may choose not to implement it, the hardware is there already, so there’s no additional production cost for the maker to implement FreeSync. FreeSync can also work with HDMI 2.0b and later. (For help understanding which is best for gaming, see our DisplayPort vs. HDMI analysis.)

Because of its open nature, FreeSync implementations vary widely between monitors. Budget displays will typically get FreeSync and a 60 Hz or greater refresh rate. The lowest-priced displays likely won’t get blur-reduction, and the lower limit of the Adaptive-Sync range might be just 48 Hz. However, there are FreeSync (as well as G-Sync) displays that operate at 30 Hz or, according to AMD, even lower.

FreeSync Adaptive-Sync works just as well as G-Sync in theory. In practice, the cheapest FreeSync displays (particularly older models) may not look quite as nice. Pricier FreeSync monitors add blur reduction and Low Framerate Compensation (LFC) to compete better against their G-Sync counterparts.

And, again, you can get G-Sync running on a FreeSync monitor without any Nvidia certification, but performance may falter. These days, monitors are opting for FreeSync support because it's effectively free, and higher quality displays work with Nvidia to ensure they're also G-Sync compatible.

FreeSync vs. G-Sync: Which Is Better for HDR?

To add even more choices to a potentially confusing market, AMD and Nvidia have upped the game with new versions of their Adaptive-Sync technologies. The move is justified by some important additions to display tech, namely HDR and extended color.

On the Nvidia side, a monitor can support G-Sync with HDR and extended color without earning the “Ultimate” certification. Nvidia assigns that moniker to monitors with the capability to offer what Nvidia deems "lifelike HDR." Exact requirements are vague, but Nvidia clarified the G-Sync Ultimate spec to Tom's Hardware, telling us that these monitors are supposed to be factory-calibrated for the HDR color space, P3, while offering 144 Hz and higher refresh rates, overdrive, "optimized latency" and "best-in-class" image quality and HDR support.

Meanwhile, a monitor must support HDR and extended color, hit a minimum of 120 Hz at 1080p resolution, and have LFC to list FreeSync Premium Pro on its specs sheet. If you’re wondering about FreeSync 2, AMD has supplanted that name with FreeSync Premium Pro. Functionally, they are the same.

Here’s another fact: If you have an HDR monitor (for recommendations, see our article on picking the best HDR monitor) that supports FreeSync with HDR, there’s a good chance it will also support G-Sync with HDR — and both can function without HDR as well.

And what of FreeSync Premium Pro? It’s the same situation as G-Sync Ultimate in that it doesn’t offer anything new to the core Adaptive-Sync tech. FreeSync Premium Pro simply means AMD has certified that monitor to provide a premium experience with at least a 120 Hz refresh rate, LFC, and HDR.
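As a rough illustration, the three criteria named above can be written as a simple checklist in Python. The `MonitorSpec` class and its field names are hypothetical, made up for this sketch, and AMD's actual certification involves testing that a spec-sheet check like this cannot capture.

```python
from dataclasses import dataclass


@dataclass
class MonitorSpec:
    """Hypothetical spec-sheet summary used only for this example."""
    max_refresh_hz: int
    has_lfc: bool
    has_hdr: bool


def missing_for_premium_pro(spec: MonitorSpec) -> list[str]:
    """List which of the criteria described in this article a monitor lacks.

    An empty list means the spec sheet meets all three criteria; it does
    not mean the monitor is actually certified by AMD.
    """
    missing = []
    if spec.max_refresh_hz < 120:
        missing.append("120 Hz refresh rate")
    if not spec.has_lfc:
        missing.append("LFC")
    if not spec.has_hdr:
        missing.append("HDR")
    return missing


if __name__ == "__main__":
    budget = MonitorSpec(max_refresh_hz=75, has_lfc=False, has_hdr=False)
    print(missing_for_premium_pro(budget))
```

A check like this is handy when comparing spec sheets, but the logo on the box remains the only real proof of certification.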

Naturally, the higher quality components necessary for FreeSync Premium Pro cost more than basic components. That means that while FreeSync technically doesn't come with a cost, FreeSync Premium Pro monitors will be more expensive than lesser monitors.

Chances are that if a FreeSync monitor supports HDR, it will work with G-Sync (Nvidia-certified or not) too.

Conclusion

So which is better: G-Sync or FreeSync? With the features being so similar, there is no inherent reason to choose one over the other. Both technologies produce similar results, so the contest is mostly a wash at this point. There are a few disclaimers, however.

If you purchase a G-Sync monitor, you will only have support for its adaptive-sync features with a GeForce graphics card. You're effectively locked into buying Nvidia GPUs as long as you want to get the most out of your monitor. With a FreeSync monitor, particularly the newer, higher quality variants that meet the FreeSync Premium Pro certification, you're often free to use AMD or Nvidia graphics cards.

Those shopping for a PC monitor will have to decide which additional features are most important to them. How high should the refresh rate be? How much resolution can your graphics card handle? Is high brightness important? Do you want HDR and extended color?

It’s the combination of these elements that impacts the gaming experience, not simply which adaptive sync technology is in use. Ultimately, the more you spend, the better gaming monitor you’ll get. These days, when it comes to displays, you do get what you pay for. But you don't have to pay thousands to get a good, smooth gaming experience.

Source: https://www.tomshardware.com/features/gsync-vs-freesync-nvidia-amd-monitor
Date: 06.12.2022